
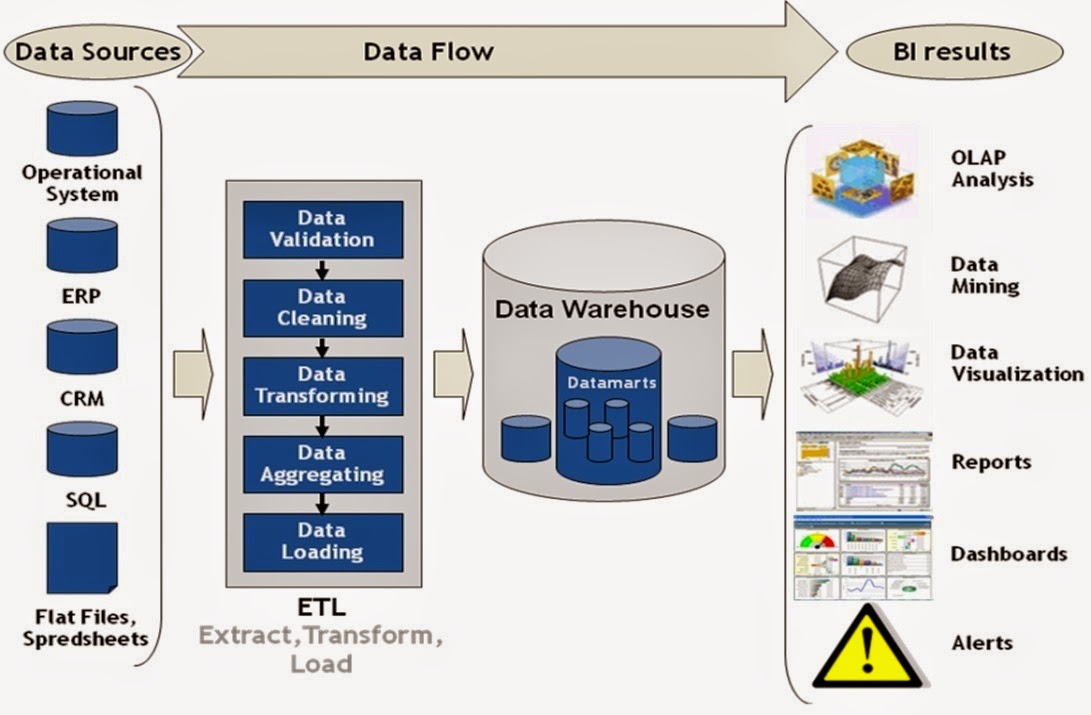

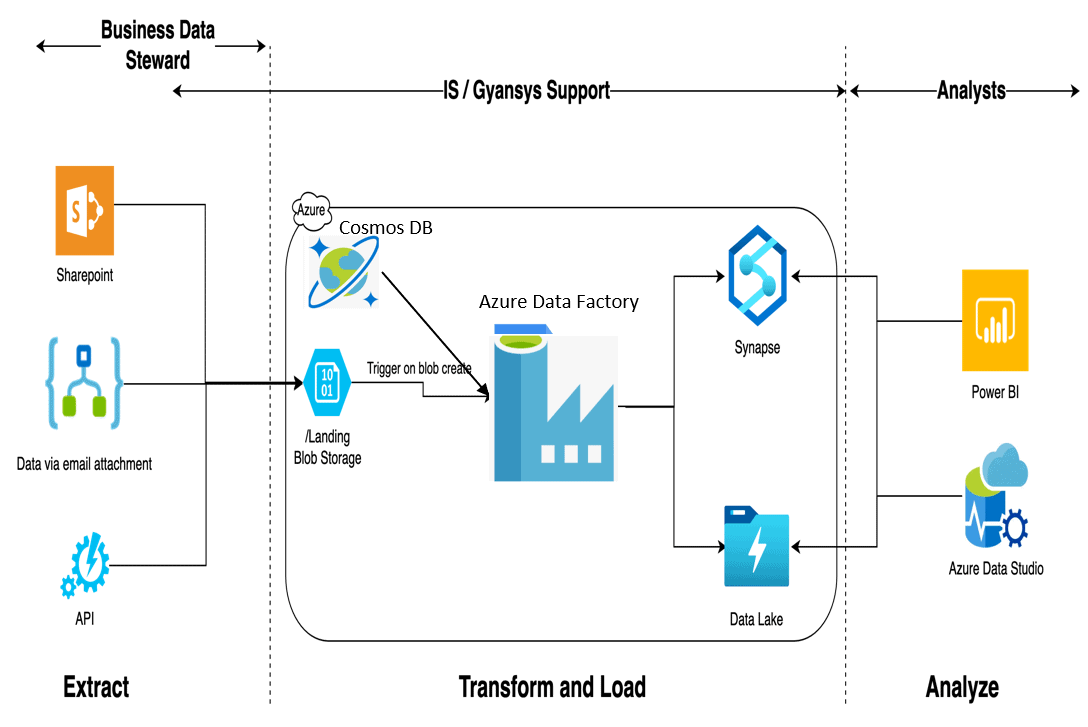

The data engineering lifecycle defines the various stages data must go through to be useful, such as generation, ingestion, processing, storage, and consumption. A data pipeline is an automated implementation of the entire data engineering lifecycle. It facilitates continuous data processing for fast data consumption by applications like machine learning, BI tools, and real-time data analytics. Each pipeline component performs one stage of the cycle and creates an output that is automatically passed to the next component. Data flows through the cycle continuously and seamlessly over a long period.

A data pipeline architecture is a blueprint of the pipeline independent of specific technologies or implementations. Having a well-architected data pipeline allows data engineers to define, operate, and monitor the entire data engineering lifecycle. They can track the performance of each component and make controlled changes to any stage in the pipeline without impacting the interface with the previous and subsequent stages.

This article explores data pipeline architecture principles and popular patterns for different use cases. We also look at different factors that determine data pipeline design choices.

- Architecture is a blueprint of a data pipeline, independent of the technologies used in its implementation.
- Expected behavior of each stage of the data pipeline from data source to downstream application.
- Reliability, scalability, security, and flexibility are essential in architecture design.
- Data flows from diverse sources to central, structured data storage.
- Data flows from diverse sources to a central, unstructured data store.
- Data flows continuously through the pipeline in the form of small messages.
- Data flows from IoT devices to analytics systems in a continuous stream of time-stamped data.

Principles in data pipeline architecture design

A data pipeline architect should keep some basic principles in mind while designing any data pipeline. Data pipelines deal with large amounts of data, and the attributes below are essential for any component that processes data at scale.

Each component of the data pipeline should be independent, communicating through well-defined interfaces. This allows individual components to be updated or changed at will without affecting the rest of the pipeline.

Data flows can vary over time, with large bursts in the number of events during certain periods (festival sales for e-commerce websites, for example). In such cases, rather than over-provisioning the pipeline so that it can handle the higher load at all times, it is more cost-efficient and flexible to give the pipeline the ability to scale itself with increasing and decreasing data flow.

The data pipeline is critical when downstream applications consume processed data in near real-time. It should be designed with fallback mechanisms in case of connectivity or component failure to ensure minimal or no data loss while recovering from such scenarios.

With the exponential increase in data available for processing, keeping it secure has become all the more important. Data pipelines have a large attack surface because of all the components involved in processing and transforming data, any of which could be dangerous if accessed by malicious entities. Any data pipeline must be architected with security in mind.

Because of the large scale and wide range of applications of a data pipeline, it is highly likely that the expectations from the pipeline will change over time. It is almost guaranteed that the technologies and implementations of individual components will change. A good data pipeline architecture is flexible, so you can incorporate such changes with minimal impact.

Over time, certain architectural patterns that have proven themselves useful for multiple applications have been given names and can be easily adapted by engineers while creating their own data pipelines. Some of them are listed below, along with scenarios where they can be used in your data pipelines.

The data warehouse architecture consists of a central data hub where data is stored and accessed by downstream analytics systems. In general, the data warehouse is characterized by strict structure and formatting of data so that it can be easily accessed and presented.

A typical data warehouse pipeline consists of the following stages:

Extraction - in this stage, data is extracted, or pulled, from the source systems

Transformation - the extracted data is transformed to match the structure that the warehouse expects
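As a rough illustration of independent components that each perform one stage and pass their output to the next through a well-defined interface, here is a minimal Python sketch. It is only one way to express the idea. The function names (`extract`, `transform`, `run_pipeline`), the comma-separated input format, and the `order_id` and `amount_cents` fields are all hypothetical and chosen for the example.

```python
from typing import Any, Callable, Iterable, Iterator

# The shared interface (an assumption of this sketch): every stage takes
# an iterable of items and yields items for the next stage.
Stage = Callable[[Iterable[Any]], Iterator[Any]]


def extract(source_rows: Iterable[str]) -> Iterator[dict]:
    """Extraction: pull raw rows from a hypothetical source system."""
    for line in source_rows:
        order_id, amount = line.split(",")
        yield {"order_id": order_id, "amount": amount}


def transform(records: Iterable[dict]) -> Iterator[dict]:
    """Transformation: coerce raw fields into the structure the
    (hypothetical) warehouse table expects."""
    for record in records:
        yield {
            "order_id": int(record["order_id"]),
            "amount_cents": round(float(record["amount"]) * 100),
        }


def run_pipeline(data: Iterable[Any], stages: list[Stage]) -> list[Any]:
    """Chain the stages so each one consumes the previous stage's output."""
    for stage in stages:
        data = stage(data)
    return list(data)


raw = ["1,9.99", "2,20.00"]
rows = run_pipeline(raw, [extract, transform])
print(rows)
```

Because each stage depends only on the shared iterable interface, one stage could be swapped for a different implementation without touching the others, which is the property the modularity principle asks for.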
